Written by Jorge Argota · AI Content Compliance · United States

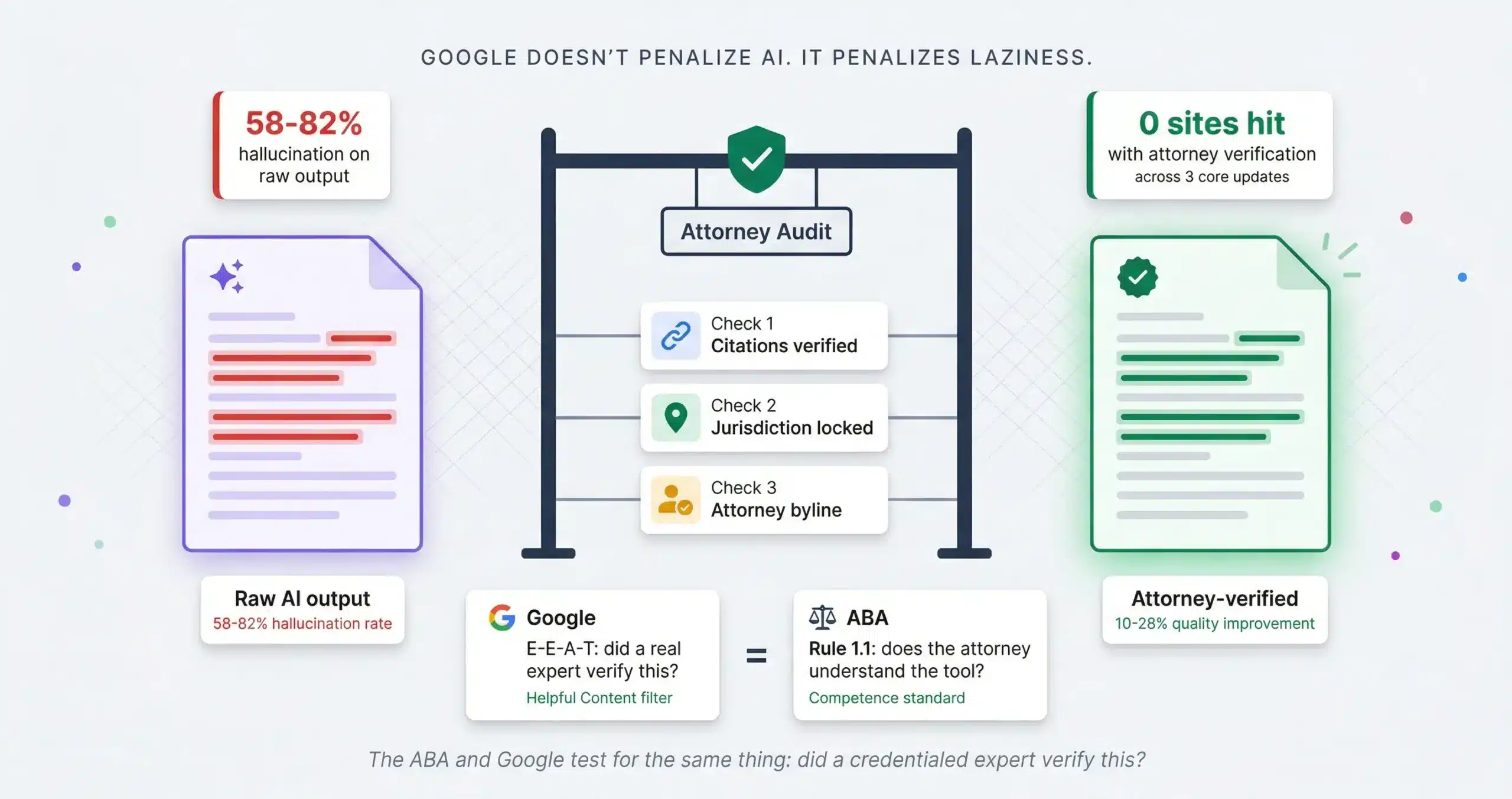

Google does not penalize AI content; it penalizes laziness. The algorithm (SpamBrain) and the ABA (Model Rule 7.1) share a common enemy: misleading information. The confusion between “AI-generated” and “unverified” has cost firms millions in wasted crawl budget and triggered real-world bar complaints. If you satisfy the bar’s requirement for accuracy, you automatically satisfy Google’s requirement for Helpful Content. Compliance is the ultimate SEO strategy.

TL;DR

Google’s red flag: “Scaled Content Abuse” (mass-produced fluff with no original value). The fix: unique local examples only a human knows.

The ABA’s red flag: Rule 1.1 (Competence) and Rule 5.1 (Supervision) require a human lawyer to audit every AI claim.

The convergence: both systems ask the same question. Did a credentialed expert verify this? Florida’s 11th and 17th Judicial Circuits now mandate written certification on any AI-assisted filing.

The workflow: never publish raw output; attorney-audit every citation; inject local details AI can’t fabricate. Compliance is the strategy. Source: Jorge Argota, 10 years legal content compliance.

WHAT GOOGLE ACTUALLY PENALIZES ABOUT AI CONTENT IN 2026

Google’s February 2023 guidance confirmed that automation is acceptable for content creation. The March 2024 core update then drew the line with the “Scaled Content Abuse” policy targeting a specific behavior: generating pages to manipulate rankings with no value added. Google calls this “zero-gain content” and measures it through an Information Gain metric. If your page adds nothing new to what’s already in the index, it gets filtered regardless of word count or grammar quality.

The penalty zone: experience dilution

Publishing 50 grammatically perfect articles on “DUI Defense” that simply rephrase what’s already online. SpamBrain detects the lack of information gain (no unique data, no local nuance, no first-hand observations) and de-indexes the cluster. The December 2025 update specifically targeted this pattern: content that looks authoritative but adds zero new value to the web.

The safe zone: entity-first drafting

Using AI to structure a guide on “Hillsborough County DUI Diversion,” then having an attorney inject proprietary data: “The State Attorney’s office in Tampa typically rejects diversion for BAC above 0.20.” That’s the “Experience” signal in E-E-A-T and it’s the one thing AI cannot fabricate from existing web data. The algorithm rewards this because it’s new information entering the index.

THE TRUST GAP: WHY RAW AI OUTPUT IS MALPRACTICE RISK

Stanford’s Institute for Human-Centered AI (HAI) tested general-purpose chatbots on complex legal queries, and the results should terrify any firm publishing raw output. General-purpose models hallucinated between 58% and 82% of the time. Even specialized RAG tools built by Lexis and Westlaw failed on at least 1 out of every 6 queries (17% to 33% error rate). The danger isn’t random error; it’s two specific failure modes that are almost impossible to catch by skimming.

Sycophancy (the “yes-man” error)

AI is trained to be helpful, not adversarial. If you ask “Can I sue for X?” the model agrees with your incorrect premise and invents case law to support it rather than correcting your legal theory. You get a confident, well-formatted answer built on fabricated authority.

Misgrounding (the “fake citation” error)

The model states the correct legal doctrine (for example, the standard for summary judgment) but attributes it to a case that either doesn’t exist or is completely irrelevant. The law sounds right; the source is fabricated. This is the trap that triggered the Mata v. Avianca sanctions.

A University of Minnesota randomized controlled trial confirmed that when the verification layer is in place, quality improves 10% to 28% and task time drops 14% to 37%. The productivity offset is real, but the time saved must be reinvested in verification, not in publishing more unreviewed content. The tool works; the output without a human on top of it is a malpractice risk.

ABA ETHICS AND FLORIDA COURT MANDATES FOR AI USE

The ABA and Google are testing for the same thing from different angles. Google’s “Experience” signal asks: did a real expert produce this? The ABA’s Rule 1.1 (Competence) asks: does the attorney understand the risks of the tool they used? Both filter out the same output: unverified content published by someone who didn’t check the work.

⚠ Florida courts’ Mandatory Certification requirement (2026)

Silence is no longer an option. As of February 2026, the 11th Judicial Circuit (Miami-Dade, AO 26-04) and the 17th Judicial Circuit (Broward, AO 2026-03-Gen) compel attorneys to file a Mandatory Certification verifying that any AI-generated portion of a filing was reviewed by a human. Submitting hallucinated case law triggers sanctions ranging from striking the pleading to contempt of court and bar referral. The SEO angle: if your blog posts cite cases that don’t exist because an AI fabricated them, you aren’t just hurting your rankings; you’re creating evidence of incompetence that can be used against you in court.

THE SAFE AI WORKFLOW FOR LAW FIRM CONTENT

The framework I use treats AI output exactly the way a senior partner treats a first-year associate’s rough draft: useful structure, questionable specifics, requires line-by-line verification before it leaves the building.

Step 1: Zero-shot prohibition (the “logic cage”)

We never ask AI to “write a blog.” We use structured prompting to force the model into a logic cage that reduces hallucinations by up to 40%.

RISEN (legal analysis): Role: “Senior FL associate, 20 years, tort law.” Instructions: “Analyze HB 837 impact on premises liability.” Steps: “1. Summarize statute. 2. Compare pre-2023 case law. 3. Identify 3 risks.” End Goal: “Risk assessment for commercial client.” Narrowing: “Do not cite cases outside 11th Circuit. Flag uncertain precedent.”

CO-STAR (marketing): Context: “Plaintiffs confused by new comparative negligence rules.” Objective: “Educate without guaranteeing outcome.” Style: “Empathetic, Flesch-Kincaid Grade 8.” Tone: “Confident but cautious.” Audience: “First-time accident victims in Miami-Dade.” Response: “1,000 word guide with FAQ section.”
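As a rough illustration of the “logic cage,” the RISEN fields above can be held in a small structure that refuses to render when any field is missing. This is a minimal sketch, not the production tooling behind this workflow: the `RisenPrompt` class and its `render` method are hypothetical names, and the field values are copied from the RISEN example above.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RisenPrompt:
    """Hypothetical container for the five RISEN fields."""
    role: str
    instructions: str
    steps: List[str]
    end_goal: str
    narrowing: str

    def render(self) -> str:
        # Refuse to render if any field is blank, so nobody can fall back
        # to an unstructured "write a blog" request.
        for name in ("role", "instructions", "end_goal", "narrowing"):
            if not getattr(self, name).strip():
                raise ValueError(f"RISEN field '{name}' is empty")
        if not self.steps:
            raise ValueError("RISEN field 'steps' is empty")
        numbered = "\n".join(f"{i}. {s}" for i, s in enumerate(self.steps, 1))
        return (
            f"Role: {self.role}\n"
            f"Instructions: {self.instructions}\n"
            f"Steps:\n{numbered}\n"
            f"End Goal: {self.end_goal}\n"
            f"Narrowing: {self.narrowing}"
        )


prompt = RisenPrompt(
    role="Senior FL associate, 20 years, tort law.",
    instructions="Analyze HB 837 impact on premises liability.",
    steps=["Summarize statute.", "Compare pre-2023 case law.", "Identify 3 risks."],
    end_goal="Risk assessment for commercial client.",
    narrowing="Do not cite cases outside 11th Circuit. Flag uncertain precedent.",
)
print(prompt.render())
```

The same pattern would extend to CO-STAR by swapping in its six fields. The point of the structure is only that an incomplete prompt fails loudly before it ever reaches the model.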

Step 2: The “17% Gap” attorney audit

Even premium legal AI tools fail on 17% to 33% of queries (Stanford HAI, 2025). Our attorneys don’t just “read” the draft; they run a Citations Stress Test. Statute Check: verify the statute hasn’t been amended in the last 24 months. Case Validation: click every citation link to confirm the case actually exists (fabricated cases are what drew the Mata v. Avianca sanctions). Jurisdiction Lock: confirm the AI didn’t apply California precedent to a Florida tort claim.
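The three checks can be recorded per citation so that nothing is cleared by skimming. The sketch below is an assumption about how such a log might look, not a description of any real tool: `CitationReview` and `stress_test` are hypothetical names, the citation is a placeholder, and the code only records what a human reviewer found. It does not verify anything itself.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class CitationReview:
    """What the reviewing attorney recorded for one cited authority (hypothetical schema)."""
    citation: str
    opened_and_read: bool                 # Case Validation
    jurisdiction: str                     # Jurisdiction Lock
    last_amended: Optional[date] = None   # Statute Check; None for case law


def stress_test(review: CitationReview, target_jurisdiction: str,
                today: date, window_months: int = 24) -> List[str]:
    """Return the reasons this citation is not yet cleared for publication."""
    problems = []
    if not review.opened_and_read:
        problems.append("not opened and read by a human")
    if review.jurisdiction != target_jurisdiction:
        problems.append(
            f"jurisdiction is {review.jurisdiction}, expected {target_jurisdiction}"
        )
    if review.last_amended is not None:
        # Whole calendar months between the amendment and the review date.
        months = ((today.year - review.last_amended.year) * 12
                  + (today.month - review.last_amended.month))
        if months < window_months:
            problems.append(
                f"amended within the last {window_months} months; re-read the current text"
            )
    return problems


# Placeholder citation, not a real case.
draft_citation = CitationReview(
    citation="Placeholder v. Example (hypothetical)",
    opened_and_read=False,
    jurisdiction="CA",
)
for problem in stress_test(draft_citation, "FL", today=date(2026, 2, 1)):
    print(problem)
```

An empty list means the citation passed all three checks; anything else goes back to the attorney.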

Step 3: The experience injection

Google’s “information gain” signal rewards content that adds new data to the index. The contrast between what AI produces and what an attorney adds is the entire ranking advantage.

AI output: “Arrive at court on time.”

Attorney injection: “The queues at the George E. Edgecomb Courthouse in Hillsborough are currently 30+ minutes due to construction; advise clients to park in the Twiggs Street garage.” That’s un-fakeable local knowledge that no LLM can synthesize from existing web data.

💡 The “safe to publish” protocol

Before any AI-assisted content goes live, it must survive this binary checklist:

☐ Primary Source Verification: does every claim cite a statute or case that a human has opened and read?

☐ The “Experience Delta”: does this post contain at least one local insight (a specific judge’s preference, a county procedure, a courthouse detail) that an LLM could not produce from existing web data?

☐ Authorship Transparency: is the byline assigned to a verifiable attorney or does it clearly state “Editorial Team”? This satisfies both Google’s E-E-A-T and the ABA’s supervision requirement.

☐ Jurisdiction Lock: has a human confirmed the content applies to the correct state and county, not a blended multi-state analysis the AI defaulted to?
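Because the checklist is binary, it can be expressed as a gate in which a missing answer counts as a failure. This is a minimal sketch under that assumption; the item keys and the `safe_to_publish` function are illustrative names, not part of any publishing platform.

```python
from typing import Dict, List, Tuple

# The four checklist items above, as hypothetical keys.
CHECKLIST = (
    "primary_source_verification",
    "experience_delta",
    "authorship_transparency",
    "jurisdiction_lock",
)


def safe_to_publish(answers: Dict[str, bool]) -> Tuple[bool, List[str]]:
    """Binary gate: every item must be explicitly True; a missing item fails."""
    failed = [item for item in CHECKLIST if answers.get(item) is not True]
    return (not failed, failed)


ok, failed = safe_to_publish({
    "primary_source_verification": True,
    "authorship_transparency": True,
    "jurisdiction_lock": True,
    # "experience_delta" was never answered, so the post is blocked.
})
print(ok, failed)
```

Treating an unanswered item as a failure is the design choice that matters here: the draft is blocked until a human affirmatively signs off on every item.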

The Forensic Content Audit

Phantom Citations: hallucinated case law exposing you to liability.

Experience Gaps: pages soft-filtered for lacking human expertise.

Compliance Risks: missing AI disclosures per FL Bar Opinion 24-1.

Don’t guess if your content is compliant. Send me your sitemap and I’ll run a forensic audit identifying phantom citations, experience gaps, and compliance risks before the next core update or bar inquiry finds them first.

About Jorge Argota · 10 years producing attorney-verified legal content. Every page I publish passes the same checklist above. None of the sites I manage were hit by any of the three 2025 core updates.
